Good AI must be able to reason logically

New EU rules are meant to rein in the use of AI and work towards more trustworthy AI systems. Bart Verheij, professor of AI & Argumentation at the University of Groningen, argues that trustworthy AI should be capable of logical reasoning. An AI system could then account for its own answers and be corrected where necessary.

FSE Science Newsroom | Charlotte Vlek

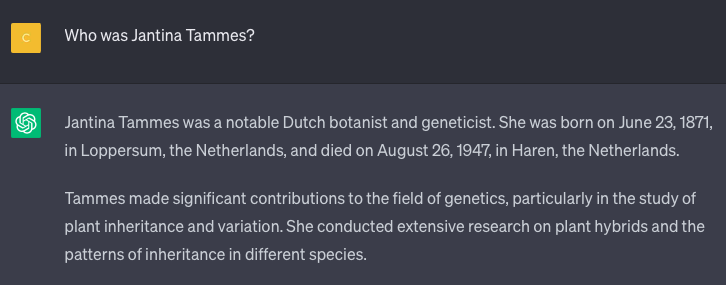

Hallucination is a persistent ailment among AI systems: out comes a very confident answer that is grammatically correct and looks good, but makes no sense. Asked ‘Who was Jantina Tammes?’, for example, ChatGPT gave a response that looks fine at first glance but states that she was born in Loppersum (incorrect) and died in Haren (also incorrect). And in the US, a lawyer used ChatGPT for his plea in a lawsuit against an airline, but that plea turned out to be full of made-up sources. The judge was not amused.

Machine Learning

ChatGPT uses Machine Learning. This is a popular method in AI in which a computer is trained on an enormous amount of data in order to learn to perform a concrete task. In essence it is a matter of statistics: having seen many examples, the computer can produce the most likely response to a new prompt.

In the case of ChatGPT, the training objective was: in a conversation, keep producing the most likely next word. So it is hardly surprising that ChatGPT sometimes hallucinates a few facts. ChatGPT ‘knows’ nothing at all about Jantina Tammes; it only produces what occurs frequently in the datasets on which it was trained. Apparently Loppersum and Haren are high-probability words in this context.
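To make the ‘most likely next word’ idea concrete, here is a minimal sketch of a word-pair counter. It is only an illustration under strong assumptions: the corpus is invented, the function names `train_bigrams` and `most_likely_next` are hypothetical, and real language models such as ChatGPT are vastly more complex than a frequency table.

```python
from collections import Counter, defaultdict

def train_bigrams(corpus):
    """Count, for every word, which words follow it in the training text."""
    followers = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for current, nxt in zip(words, words[1:]):
            followers[current][nxt] += 1
    return followers

def most_likely_next(followers, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    counts = followers.get(word.lower())
    if not counts:
        return None
    return counts.most_common(1)[0][0]

# Hypothetical toy corpus: invented sentences, not real training data.
corpus = [
    "the professor was born in groningen",
    "the painter was born in groningen",
    "the botanist was born in haren",
]
followers = train_bigrams(corpus)

# The model picks the most frequent continuation, whoever the sentence is about.
print(most_likely_next(followers, "in"))
```

The counter has no notion of who was born where. It returns whichever place name most often followed "in" during training, which is how a fluent but wrong answer can arise.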

Verheij: ‘But sometimes such a language model suddenly turns out to be quite good at tasks it was not trained for, such as addition and subtraction. And sometimes ChatGPT suddenly comes up with an exact line of reasoning, while at other moments it is incapable of logical reasoning and talks nonsense. Nobody understands exactly when or why. And that makes such a system unreliable.’

Knowledge and data

Verheij distinguishes two major currents within AI: knowledge systems and data systems. A knowledge system works on the basis of logic: you put knowledge and rules into it, and it always gives you a correct answer, with an explanation if desired. Systems of this kind are built from the ground up by people. Data systems work with enormous datasets and make sense of them on their own, for example by using Machine Learning.
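As a rough illustration of the knowledge-system side, the sketch below applies hand-written rules to a set of facts and returns both a conclusion and the reason for it. The rules, the fact names and the `decide` function are hypothetical and chosen only for the example; real knowledge systems use far richer logic.

```python
def decide(facts, rules):
    """Apply the first rule whose conditions all hold.

    Returns a (conclusion, explanation) pair, so the answer can be traced
    back to the rule that produced it.
    """
    for rule in rules:
        if all(facts.get(name) == value for name, value in rule["if"].items()):
            reasons = ", ".join(f"{k}={v}" for k, v in rule["if"].items())
            return rule["then"], f"rule '{rule['name']}' applies because {reasons}"
    return None, "no rule applies"

# Hypothetical hand-written rules; more specific rules come first.
rules = [
    {"name": "penguins do not fly",
     "if": {"is_bird": True, "is_penguin": True},
     "then": "cannot fly"},
    {"name": "birds fly",
     "if": {"is_bird": True},
     "then": "can fly"},
]

conclusion, explanation = decide({"is_bird": True, "is_penguin": True}, rules)
print(conclusion)
print(explanation)
```

Because every answer names the rule behind it, a person can inspect the reasoning and correct a rule that turns out to be wrong. The price is that each rule has to be written by hand.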

Modern AI in particular is bad at explaining itself

PhD student Cor Steging, supervised by Verheij, investigated how Machine Learning deals with things like rules and logic. For this, Steging used a rule from Dutch law that lays down exactly what counts as a so-called unlawful act (onrechtmatige daad), and when. From it he generated the ‘perfect dataset’ of examples and examined what a computer subsequently distils from that dataset.

After training on this ‘perfect dataset’, the computer program could indicate with high accuracy whether or not something was unlawful. No surprise so far. But the program turned out not to have learned the correct underlying rules from the dataset. Verheij: ‘In particular, the exact combination of logical conditions that the law requires is not captured well. Threshold values (such as an age limit) are not found correctly either.’
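How can high accuracy coexist with a wrongly learned threshold? The sketch below shows one way this can happen, using a deliberately crude learner. It is not Steging's experimental setup: the age-18 rule, the ten-year sampling of the training data and the function names are all assumptions made for the illustration.

```python
def true_rule(age):
    """Hypothetical rule with a sharp boundary: it applies from age 18."""
    return age >= 18

def learn_threshold(examples):
    """Crude learner: place the cut-off halfway between the oldest
    negative and the youngest positive training example."""
    oldest_negative = max(age for age, label in examples if not label)
    youngest_positive = min(age for age, label in examples if label)
    return (oldest_negative + youngest_positive) / 2

# Noise-free training data, but sampled only every ten years (an assumption).
training = [(age, true_rule(age)) for age in range(0, 100, 10)]
threshold = learn_threshold(training)

# Evaluate the learned cut-off on every age from 0 to 99.
all_ages = range(100)
wrong = [age for age in all_ages if (age >= threshold) != true_rule(age)]
accuracy = (len(all_ages) - len(wrong)) / len(all_ages)

print("learned threshold:", threshold)
print("accuracy:", accuracy)
print("misclassified ages:", wrong)
```

The learner scores well overall, yet every error sits right next to the boundary, which is exactly where a legal rule needs to be exact. An overall accuracy figure alone would not reveal this, while a test set aimed at the boundary would.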

‘Modern AI in particular, powerful as it is, is bad at explaining itself. It is a black box.’ And that has to change, according to Verheij. That is why researchers in Groningen are working on computational argumentation. ‘It would be great if humans and machines could support each other in a critical conversation. Because only humans understand the human world, and only machines can process so much information so quickly.’
