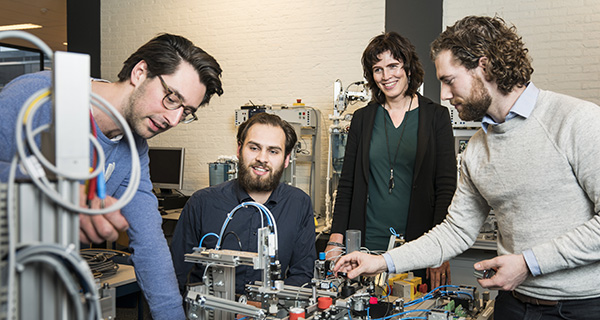

Society/Businesses

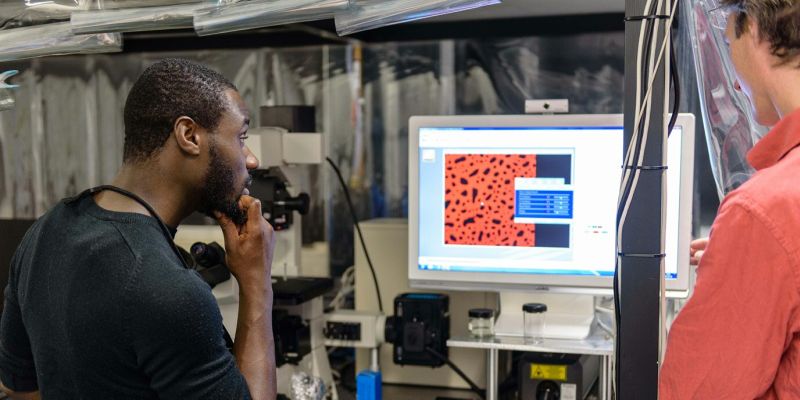

The university is there for everyone. Researchers help address societal challenges by carrying out research for companies and organizations. Discover what the university can do for your business or for you personally.