The answer to the filter bubble? Artificial Intelligence!

Coverage of the coronavirus has once again made clear that in recent years we have increasingly come to live in our own filter bubbles. Because algorithms on social media determine our news feed and ‘traditional media’ are also turning to personalisation, everyone is said to see only information that confirms their own worldview, which would lead to more polarisation in society. Groningen-based Prof. Dr. of Computational Semantics Tommaso Caselli and the Data Science team of the Center for Information Technology (CIT) want to break through our filter bubble, with the help of… an algorithm.

Text: Jorn Lelong

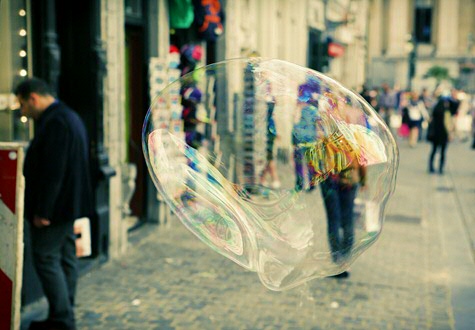

When we talk about filter bubbles, social media are the first thing that comes to mind. There, algorithms determine which posts we get to see, depending on the pages that we, or our friends, like. In this way Facebook, Instagram and Twitter create for each of us a personalised bubble of news and information that suits our own views.

Breaking filter bubbles

But that personalisation is not limited to social media. Think of Blendle, which recommends articles based on your interests or on articles you have read before. ‘Traditional’ media have also been experimenting more and more in recent years with, for example, personalised newsletters, in an attempt to hold on to their readers. What does that mean for the way we read about the world? That is what assistant professor Tommaso Caselli of the University of Groningen wants to understand better with his project ‘Breaking filter bubbles’. What makes the project unique is its interdisciplinary collaboration. Caselli not only involves Marcel Broersma, professor of Media and Journalistic Culture, in the project; he can also count on the help of the CIT's Data Science team.

A hefty corpus

As an assistant professor in computational linguistics, Caselli has long been working on algorithms that analyse linguistic phenomena. “In an earlier project, as a postdoc at VU Amsterdam, I looked at how ten different sources told the same story. I wanted to take that project further with news reports, but on a large scale.” You can take that fairly literally. Data scientist Dimitrios Soudis of the CIT gets to dig into a New York Times archive spanning two decades. To keep things somewhat manageable, they are limiting themselves for now to news articles about natural disasters. “Those are relatively simple stories,” says Caselli. “Later we also want to look at crime and political reporting, but first we need a clear picture of how news stories are put together.” Manually pulling all the coverage of natural disasters out of the enormous New York Times archive is of course an impossible task. For that, Soudis draws on his expertise in Artificial Intelligence (AI). “We go to the NY Times website and search, for example, for articles in the category ‘earthquakes’. We download those articles, and using a mathematical model we calculate which articles in our corpus match them.”
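The article does not say which mathematical model the team uses. As a rough illustration of the general idea (a handful of seed articles from a known category, used to find matching articles in a larger corpus), here is a minimal sketch that assumes a simple TF-IDF bag-of-words representation and cosine similarity. The function names and the choice of model are assumptions made for this example, not the project's actual method.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and keep alphabetic tokens only."""
    return re.findall(r"[a-z]+", text.lower())


def tfidf_vectors(docs):
    """Turn each document into a sparse {term: weight} TF-IDF vector."""
    counts_per_doc = [Counter(tokenize(d)) for d in docs]
    n_docs = len(docs)
    doc_freq = Counter()
    for counts in counts_per_doc:
        doc_freq.update(counts.keys())
    # Smoothed inverse document frequency (an assumed, common variant).
    idf = {t: math.log((1 + n_docs) / (1 + df)) + 1.0 for t, df in doc_freq.items()}
    return [{t: c * idf[t] for t, c in counts.items()} for counts in counts_per_doc]


def cosine(a, b):
    """Cosine similarity between two sparse vectors."""
    dot = sum(w * b.get(t, 0.0) for t, w in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_by_similarity(seed_articles, corpus):
    """Return (score, corpus_index) pairs, most similar to any seed first."""
    vectors = tfidf_vectors(seed_articles + corpus)
    seed_vecs = vectors[: len(seed_articles)]
    corpus_vecs = vectors[len(seed_articles):]
    scored = [
        (max(cosine(vec, seed) for seed in seed_vecs), index)
        for index, vec in enumerate(corpus_vecs)
    ]
    return sorted(scored, reverse=True)


# Hypothetical toy data, invented for illustration only.
seeds = ["An earthquake of magnitude 6.1 struck the coast, killing dozens."]
corpus = [
    "The senate passed the budget bill on Tuesday.",
    "A strong earthquake struck the region and rescue teams searched for survivors.",
]
ranked = rank_by_similarity(seeds, corpus)
```

In a real setting one would still need to pick a score threshold, or inspect the top of the ranking by hand, to decide which corpus articles count as a match.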

Language remains tricky

So much for collecting the articles. According to Soudis, the real difficulty of the project lies in the fact that language is not an exact science. “Language remains hard for algorithms to understand. Just think of how often words like whirlwind, tsunami or landslide are used figuratively. So we have to filter those out.” For now, computers are not yet able to understand language at a deeper level, so as a data scientist you have to be creative. Dimitrios Soudis came up with the idea of working with frequencies. “We create a kind of dictionary of terms related to natural disasters. Then we look at how often certain words occur in the selected articles. And even more importantly: which words they are connected to. That way we can unravel syntactic relations between words.”
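The frequency approach Soudis describes can be sketched roughly as follows: count how often terms from a disaster dictionary occur, and record which words appear near them. The lexicon, the window size and the function names below are hypothetical, and a fixed word window is only a crude stand-in for the syntactic relations mentioned in the quote.

```python
import re
from collections import Counter

# Hypothetical mini-lexicon of disaster terms, invented for illustration.
DISASTER_TERMS = {"earthquake", "tsunami", "landslide", "whirlwind"}


def tokenize(text):
    """Lowercase the text and keep alphabetic tokens only."""
    return re.findall(r"[a-z]+", text.lower())


def term_frequencies(articles, lexicon):
    """Count how often each lexicon term occurs across all articles."""
    counts = Counter()
    for article in articles:
        counts.update(tok for tok in tokenize(article) if tok in lexicon)
    return counts


def cooccurrences(articles, lexicon, window=3):
    """Count (lexicon term, neighbour) pairs within `window` tokens on either side."""
    pairs = Counter()
    for article in articles:
        tokens = tokenize(article)
        for i, tok in enumerate(tokens):
            if tok not in lexicon:
                continue
            start = max(0, i - window)
            end = min(len(tokens), i + window + 1)
            for j in range(start, end):
                if j != i:
                    pairs[(tok, tokens[j])] += 1
    return pairs


# Hypothetical example sentences: one literal use, one figurative use.
articles = [
    "The earthquake destroyed homes.",
    "She won the election by a landslide victory.",
]
freq = term_frequencies(articles, DISASTER_TERMS)
pairs = cooccurrences(articles, DISASTER_TERMS)
```

In this toy example "landslide" co-occurs with "election" and "victory", while "earthquake" co-occurs with "destroyed". Neighbour patterns like these are one possible signal for separating figurative from literal uses, though the article does not describe how the team actually does this.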

Reconstructing patterns

This allows them to look at news reports in a new way. Instead of looking at how an event is told in individual articles, Caselli and Soudis look at which underlying patterns recur across all news reports about natural disasters. “Regardless of the journalist's individual style, news reports are written according to certain templates. With an earthquake, for example, you cover its magnitude, the place where it happened and the number of victims, or how the relief workers sprang into action. That is what we are trying to reconstruct.”

From small to large

Once that model works, the next step according to Caselli is to broaden the corpus. “You have to start small, but ultimately we want to look at how other media report on the same event. Which sources they give a voice to, which information they present first or perhaps leave out. We could, for example, include the difference between tabloid and traditional media in our research, as well as social media.”

Google News 2.0

Putting an end to filter bubbles altogether strikes Caselli as a utopia. “People will always keep going to the news media they trust. So you also create filter bubbles yourself. But you have to give people the opportunity to get the complete picture and make their own choice. So what we want is to offer an overview: these are the facts, and these are the different perspectives the media take on them. A kind of Google News 2.0, you could say.”
